The ultimate storage shootout!

After years of building and testing servers in various configurations, we have long suspected that hardware RAID is not all it’s cracked up to be.

We have also believed that FreeBSD’s GMIRROR and ZFS are great, but until now that belief rested on gut feeling and anecdotal evidence.

Today, we change that.

The Test Setup

The tests were administered on this system:

| Component | Details |
|---|---|
| Motherboard | Supermicro X9DRi-F |
| CPU | 2x Xeon E5-2620 |
| Memory | 32GB DDR ECC |
| Hard Drives | 2x Toshiba MK1002TSKB (HDDs) |
| RAID Card | Supermicro SMC2108 |

Each test was run twice and the results averaged. The test procedure was:

1. Boot the system using mfsBSD (FreeBSD 10.2).

2. Destroy the existing partition table(s) with gpart destroy.

3. Partition the disk(s) and create the appropriate file system.

4. Mount the new file system and cd into it.

5. Install the bonnie++ benchmarking tool.

6. Run bonnie++ (bonnie++ -u root -d .).

7. Record the results.
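For the MB Ports + GMIRROR case, steps 2 through 6 can be sketched as a dry run. This is a minimal sketch, assuming disk names ada0 and ada1, a mirror named gm0, and a mount point of /mnt (none of these come from the post); it labels the whole disks rather than partitions, and it only builds and prints the commands, since gpart destroy is destructive.

```python
"""Dry-run sketch of the UFS + GMIRROR benchmark procedure (MB Ports case).

Assumptions (hypothetical, not from the post): disks ada0/ada1, mirror gm0,
mount point /mnt. Nothing is executed; commands are only built and printed.
"""


def ufs_gmirror_procedure(disks=("ada0", "ada1"), mirror="gm0", mountpoint="/mnt"):
    """Return the shell commands for steps 2-6 as a list of strings."""
    cmds = []
    # Step 2: wipe any existing partition table (-F forces it if partitions exist).
    for disk in disks:
        cmds.append(f"gpart destroy -F {disk}")
    # Step 3: load GEOM mirror, build the software RAID 1, put UFS (soft updates) on it.
    cmds.append("gmirror load")
    cmds.append(f"gmirror label -v {mirror} " + " ".join(disks))
    cmds.append(f"newfs -U /dev/mirror/{mirror}")
    # Step 4: mount the new file system and work from inside it.
    cmds.append(f"mount /dev/mirror/{mirror} {mountpoint}")
    cmds.append(f"cd {mountpoint}")
    # Step 5: install the benchmark from packages.
    cmds.append("pkg install -y bonnie++")
    # Step 6: run bonnie++ as root against the current directory.
    cmds.append("bonnie++ -u root -d .")
    return cmds


if __name__ == "__main__":
    for line in ufs_gmirror_procedure():
        print(line)
```

Printing the commands first makes it easy to check device names before running anything by hand on the test box.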

It is important to note, along the lines of what Brendan Gregg says in his talk on performance analysis, that 100% of benchmarks are wrong. The tool we’re using (bonnie++) is designed to defeat caches and similar optimizations in order to produce a “worst possible case” throughput figure. This is very “unfair” to ZFS in particular, whose ARC and LZ4 compression deliver large performance gains in the real world.

Additionally, because LZ4 can skew results when the benchmark data is not properly randomized, we left it disabled for these tests. In the real world, however, we highly recommend it in almost all situations, including DB servers. The bottom line: under real-world workloads, ZFS is likely to perform better than these tests show.
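To see why poorly randomized benchmark data skews results on a compressing filesystem, compare how much a buffer of zeros and a buffer of random bytes shrink. Python’s standard library has no LZ4 binding, so this sketch uses zlib as a stand-in; the absolute ratios differ from LZ4, but the contrast is the point.

```python
import os
import zlib

SIZE = 1 << 20  # 1 MiB sample


def compression_ratio(data: bytes) -> float:
    """Original size divided by compressed size (higher means more compressible)."""
    return len(data) / len(zlib.compress(data))


zeros = bytes(SIZE)             # what a naive benchmark might write
random_data = os.urandom(SIZE)  # properly randomized data

print(f"zeros:  {compression_ratio(zeros):.1f}x")
print(f"random: {compression_ratio(random_data):.3f}x")
```

The zero-filled buffer shrinks by orders of magnitude while the random one does not shrink at all, so a compressing filesystem fed zeros would write far fewer bytes to disk than the benchmark thinks it wrote.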

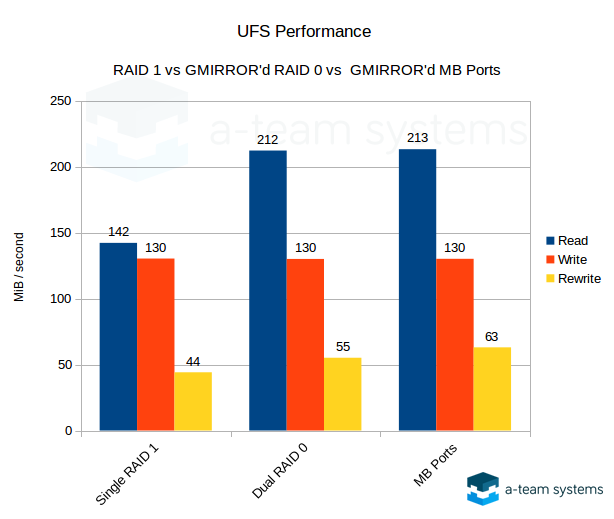

UFS RAID 1 (Mirror) Performance

In these tests we used a UFS (FreeBSD’s native filesystem) volume on top of the following block storage providers:

- Single RAID 1: A hardware RAID controller configured for RAID 1, presenting a single volume to the OS.

- Dual RAID 0: A hardware RAID controller configured for two RAID 0s. This is essentially a JBOD configuration (no RAID performed by the card), and in this case should perform about the same as the motherboard ports. However, some cheaper RAID cards perform poorly in this mode, so be warned. The two volumes presented to the OS are then combined into a software RAID 1 using FreeBSD GMIRROR.

- MB Ports: Disks are directly attached using the SATA ports on the motherboard. The two disks are then combined into a software RAID 1 using FreeBSD GMIRROR.

As you can see, hardware RAID loses across the board. GMIRROR does a much better job of using both disks for reads, and it shows regardless of whether the motherboard or the RAID card ports are used.

Results: With UFS, hardware RAID is not only a waste of money, it is also almost 50% slower for reads.
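“Almost 50% slower” means the hardware RAID 1 volume delivered roughly half the read throughput of GMIRROR. The arithmetic, including averaging the two runs of each test, is sketched below; the throughput figures are invented placeholders, not the measurements behind the charts.

```python
from statistics import mean


def averaged(runs):
    """Average the repeated runs of one test (each test in the post ran twice)."""
    return mean(runs)


def percent_slower(baseline, measured):
    """How much slower `measured` is than `baseline`, as a percentage of baseline."""
    return (1 - measured / baseline) * 100


# Hypothetical read throughputs in MB/s, NOT the post's measured numbers.
gmirror = averaged([200.0, 210.0])
hw_raid1 = averaged([104.0, 106.0])
print(f"{percent_slower(gmirror, hw_raid1):.1f}% slower")  # prints "48.8% slower"
```

Note the asymmetry: a configuration that is 50% slower than another is the same as the other being 100% (2x) faster, so the percentage always depends on which result is taken as the baseline.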

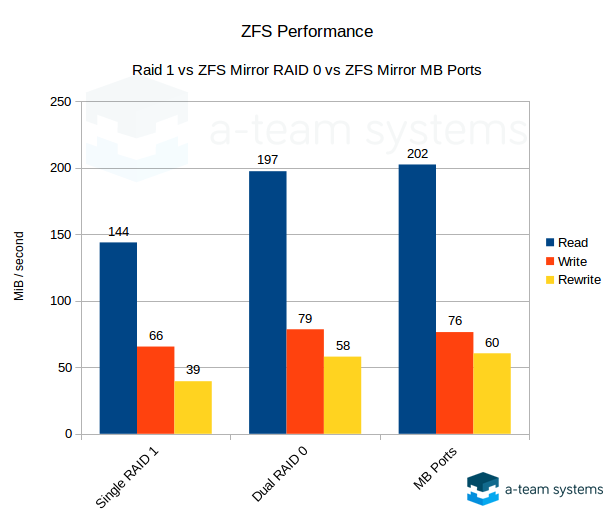

ZFS Performance

In these tests we used ZFS in one of the following configurations:

- Single RAID 1: A hardware RAID controller configured for RAID 1, presenting a single volume to the OS, with ZFS only seeing it as a single disk.

- Dual RAID 0: A hardware RAID controller configured for two RAID 0s. This is essentially a JBOD configuration (no RAID performed by the card), and in this case should perform about the same as the motherboard ports. However, some cheaper RAID cards perform poorly in this mode, so be warned. The two volumes presented to the OS are then combined into a ZFS mirror.

- MB Ports: Disks are directly attached using the SATA ports on the motherboard. The two disks are then combined into a ZFS mirror.
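The three ZFS layouts differ only in what zpool create is given. This minimal sketch returns the command for each layout, assuming a pool named tank, mfid0/mfid1 for the volumes exported by the RAID card (the naming used by the mfi(4) driver, assumed here for the SMC2108), and ada0/ada1 for the motherboard ports; all of these names are assumptions, not taken from the post. It also builds the zfs set compression command, since LZ4 was disabled for the benchmark but is recommended in production.

```python
"""Hypothetical zpool/zfs commands for the three ZFS layouts (dry run only)."""

POOL = "tank"  # hypothetical pool name


def zpool_create(layout: str, pool: str = POOL) -> str:
    """Return the zpool create command for one layout; device names are assumed."""
    if layout == "single_raid1":
        # The card mirrors the disks; ZFS sees one volume and has no redundancy of its own.
        return f"zpool create {pool} mfid0"
    if layout == "dual_raid0":
        # One RAID 0 volume per disk on the card; ZFS mirrors the two volumes.
        return f"zpool create {pool} mirror mfid0 mfid1"
    if layout == "mb_ports":
        # Disks on the motherboard SATA ports; ZFS mirrors them directly.
        return f"zpool create {pool} mirror ada0 ada1"
    raise ValueError(f"unknown layout: {layout}")


def compression_setting(benchmark: bool, pool: str = POOL) -> str:
    """LZ4 was left off for the benchmark but is recommended in production."""
    return f"zfs set compression={'off' if benchmark else 'lz4'} {pool}"


if __name__ == "__main__":
    for layout in ("single_raid1", "dual_raid0", "mb_ports"):
        print(zpool_create(layout))
    print(compression_setting(benchmark=True))
```

In the Single RAID 1 layout ZFS can still detect corruption through its checksums, but with only one device it has no second copy to repair from, which is one reason the post calls that layout a bad idea.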

Results: Running ZFS on top of hardware RAID 1 was never a great idea, and this test shows it clearly. Again, it is a waste of money, and it is 37% slower. You can also see that ZFS writes more slowly than UFS, due to its verification and checksumming and the nature of COW (copy-on-write) filesystems.

Conclusions

With these tests we show that, for read operations, hardware RAID 1 is slower: at best a little slower, and at worst a lot slower, than either a ZFS mirror or UFS on top of a GMIRROR provider. For write operations ZFS is slower, but you gain peace of mind knowing that the data you read has been verified.

Here is what we’ve taken away from these results: hardware RAID cards should only be used if you need a large number of SATA/SAS ports and your motherboard does not have enough (and a few dual-port PCIe cards won’t provide enough). In that case, configure the card as JBOD or as one RAID 0 per disk. Do not use it for actual RAID functions, only as a way of getting more SATA or SAS ports, and run GMIRROR+UFS or ZFS on top of it.

Hardware RAID cards also create lock-in: you can’t move disks between RAID card manufacturers, and sometimes not even between models from the same manufacturer. This means you can’t simply move the disks to another server if your card fails.

With that in mind, best-practice storage under FreeBSD comes down to choosing between GMIRROR+UFS and ZFS. Both are very mature under FreeBSD and well supported: UFS is a rock, and FreeBSD is a Tier 1 OS for ZFS support. Both also offer comparable read speeds.

That said, let’s review some of the differences:

UFS w/ GMIRROR

Pros:

- High write performance.

- Traditional FreeBSD backup tools like dump(8) and restore(8) work.

Cons:

- No checksum verification of data, just like hardware RAID 1.

- Rebuilding the array (disk replacement) involves reading and writing every block on the disk, whether or not it is in use.

- No compression.

- No automatic repair/recovery of bad blocks.

- Adding more than two disks to a GMIRROR does not increase usable space, and write performance remains the same.
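To put the rebuild point in numbers: a GMIRROR resync copies the whole provider, whereas a ZFS resilver (see the ZFS list that follows) copies only allocated data. This is an idealized sketch that assumes a constant sequential copy rate; the disk size, rate, and fill level are hypothetical, and real resilvers on fragmented pools can run well below sequential speed.

```python
def rebuild_hours(bytes_to_copy: float, mb_per_s: float) -> float:
    """Idealized rebuild time: bytes copied at a constant rate, in hours."""
    return bytes_to_copy / (mb_per_s * 1_000_000) / 3600


DISK = 1_000_000_000_000  # hypothetical 1 TB disk
RATE = 120                # hypothetical sustained MB/s
USED = 0.30               # hypothetical: 30% of the mirror holds data

gmirror = rebuild_hours(DISK, RATE)     # resync copies every block
zfs = rebuild_hours(DISK * USED, RATE)  # resilver copies allocated data only
print(f"GMIRROR: {gmirror:.1f} h, ZFS: {zfs:.1f} h")  # prints "GMIRROR: 2.3 h, ZFS: 0.7 h"
```

The GMIRROR figure is fixed by disk size, while the ZFS figure scales with how full the pool is, which is why the advantage disappears only as the pool approaches 100% full.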

ZFS Mirror

Pros:

- All data is verified against its checksum when it is read and, if it is corrupt, repaired from the other side of the mirror.

- Highly robust snapshots and other tools.

- LZ4 compression has virtually no downside or overhead, and can dramatically speed up compressible workloads such as DB, NAS, and virtual machine disks.

- Rebuilding (resilvering after a disk replacement) only needs to copy data that is actually in use. Unless the pool is 100% full, this saves a lot of time and shortens the window of degraded performance.

- The ARC (Adaptive Replacement Cache) is highly adaptive and intelligent. It can use as much free RAM as is available, making it ideal for NAS servers as well as for application or DB servers (where its size can be limited).

- SSDs can be used as a dedicated ZIL (log) device to increase synchronous write performance, for example when using ZFS over NFS as ESXi storage.

- RAIDZ levels are also available; they provide more usable disk space than a mirror with the same number of disks, and can increase throughput.

Cons:

- Slower write performance due to verification/checksumming and the nature of COW (copy-on-write) filesystems.
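Two of the ZFS pros above, limiting the ARC on application or DB servers and adding SSDs as a ZIL device, come down to one-line settings. This sketch builds them: a /boot/loader.conf line setting the vfs.zfs.arc_max tunable (in bytes), and a zpool add ... log command. The pool name tank, the device names ada2/ada3, and the 16 GiB reservation are hypothetical.

```python
GIB = 1024 ** 3


def arc_max_line(total_ram_gib: int, reserve_gib: int) -> str:
    """/boot/loader.conf line capping the ARC, leaving reserve_gib for applications."""
    if reserve_gib >= total_ram_gib:
        raise ValueError("reserve must be smaller than total RAM")
    return f'vfs.zfs.arc_max="{(total_ram_gib - reserve_gib) * GIB}"'


def add_log_device(pool: str, ssds: list) -> str:
    """zpool command adding SSD log (ZIL) device(s); two or more SSDs are mirrored."""
    if not ssds:
        raise ValueError("need at least one SSD")
    if len(ssds) == 1:
        return f"zpool add {pool} log {ssds[0]}"
    return f"zpool add {pool} log mirror " + " ".join(ssds)


# Hypothetical: the test box's 32 GB of RAM, with 16 GiB kept for a database.
print(arc_max_line(32, 16))
print(add_log_device("tank", ["ada2", "ada3"]))
```

Mirroring the log device is a common choice because the ZIL holds synchronous writes that have not yet reached the main pool.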

So … Which is Better?

It really comes down to your write load, data size, and budget. Here is a simplistic summary of our feelings:

If you don’t foresee constant, intensive (50+ MiB/sec) writing, ZFS (especially with compression enabled) is going to be better.

If you will see intensive writes, still stick with ZFS but switch to SSDs. They greatly reduce the impact of ZFS’s checksumming overhead compared with traditional drives (and everything will be a lot faster, too).

If your data set is so large that SSDs are not cost-effective, UFS is still a great choice.
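The rule of thumb above can be restated as a small function. This is only our summary written as code: the 50 MiB/s threshold comes from the text, while the function name and the yes/no ssds_affordable input are hypothetical simplifications of “write load, data size, and budget”.

```python
WRITE_THRESHOLD_MIB_S = 50  # "constant, intensive (50+ MiB/sec) writing"


def recommend(sustained_write_mib_s: float, ssds_affordable: bool) -> str:
    """Restate the post's simplistic summary; not a sizing tool."""
    if sustained_write_mib_s < WRITE_THRESHOLD_MIB_S:
        return "ZFS (with LZ4)"
    if ssds_affordable:
        # Intensive writes: stay on ZFS, but move to SSDs.
        return "ZFS on SSDs"
    # Data set too large for SSDs to be cost-effective.
    return "UFS on GMIRROR"


print(recommend(10, ssds_affordable=False))
```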

What about RAID 5?

While we did not test it here, we expect ZFS RAIDZ1 and RAIDZ2 to be better than hardware RAID 5 for the same reasons that ZFS is better than hardware RAID 1.
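Capacity is where RAIDZ differs most from a mirror: RAIDZ1, like RAID 5, gives up one disk’s worth of space to parity, and RAIDZ2 gives up two, while a mirror of any width holds only one disk’s worth of data. This sketch counts usable space in whole disks for equal-size disks, ignoring RAIDZ padding and metadata overhead.

```python
# Parity disks per layout; a mirror is handled separately.
PARITY = {"raid5": 1, "raidz1": 1, "raidz2": 2}


def usable_disks(layout: str, n: int) -> int:
    """Usable capacity, in disks, for n equal disks (ignores padding and metadata)."""
    if layout == "mirror":
        if n < 2:
            raise ValueError("a mirror needs at least two disks")
        return 1  # every disk holds a full copy
    parity = PARITY[layout]
    if n <= parity:
        raise ValueError(f"{layout} needs more than {parity} disk(s)")
    return n - parity


for layout in ("mirror", "raidz1", "raidz2"):
    print(layout, usable_disks(layout, 4), "of 4 disks usable")
```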

Need help with Linux or FreeBSD infrastructure?

A-Team Systems provides engineer-led support for production Linux and FreeBSD environments, including troubleshooting, operational oversight, and ongoing infrastructure management.
